Key Findings

- In our recent UNHEARD VOICE investigation, we identified multiple fake personas using profile pictures that combined likely GAN-generated fake faces with photos “stolen” from elsewhere on the internet.

- While we have previously observed actors using stolen photos and GAN faces to create fake personas, this is the first time we have seen the combination of the two techniques in an online influence operation.

- These “GAN-collages” are more difficult to detect than stolen or AI-generated profile pictures. Analysts must first assess that an image is worth investigating, then identify component portions of the image. This is more time-consuming than a simple reverse image search or common analysis techniques to detect AI-generated faces.

- We assess that this technique is currently too labor-intensive to scale across a large operation. In addition to requiring image editing skills, it also requires sourcing various collage component images. However, as free image editing tools improve and become more accessible, we believe IO actors will increasingly employ this technique in their operations.

Background

During our joint UNHEARD VOICE investigation, researchers at Graphika and the Stanford Internet Observatory identified multiple fake personas using profile pictures that were created using GAN-generated faces and images sourced from elsewhere on the internet. Often, this entailed editing a GAN-generated face onto the image of a model’s body and then superimposing this over an unrelated background. We refer to the resulting images as “GAN-collages” in this blog post.

This is the first time we have identified fake personas using images edited in this way as part of an online influence operation. The technique differs from previous operations we have analyzed, which typically “steal” profile pictures from unrelated social media accounts and online resources, or use fake faces created using artificial intelligence techniques, such as generative adversarial networks (GANs).

This blog post outlines our analysis of one particular image from the takedown set to illustrate our findings.

Look a Little Bit Closer

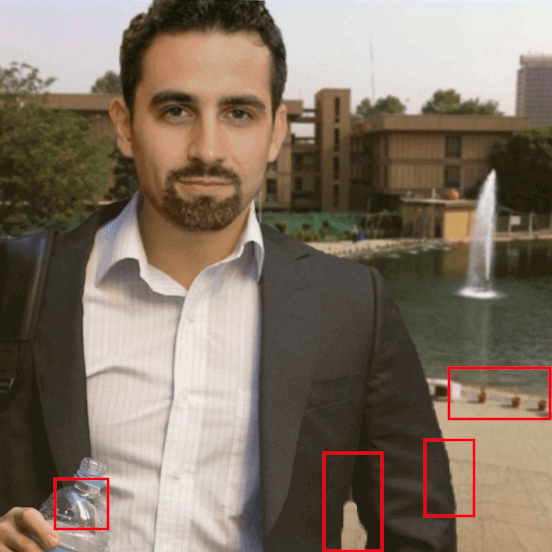

A fake persona active on both Instagram and Twitter in the Middle East cluster of the UNHEARD VOICE operation displayed the profile picture shown above. At first glance, the image appears reasonably authentic. However, closer inspection revealed several inconsistencies that warranted further analysis. In no particular order, these inconsistencies include:

- The generally odd perspective of the image subject and background. Where is the subject standing? Is the subject floating?

- The strong light source reflected by the water bottle is not evident anywhere else in the image.

- The gap between the subject’s body and left arm has unnatural rounded edges and includes a totally different color closer to the armpit.

- The outer edge of the left arm is choppy/pixelated, possibly due to use of image editing tools (e.g., the magnetic lasso tool in Photoshop).

- Shadows visible in the background image are inconsistent with shadows present on the image subject. While this could be explained by additional light sources pointed at the subject, the inconsistency noted in the second bullet makes this unlikely.

- The face appears to be higher resolution than either the body or the background. The background, in particular, is very blurry, but this is not the type of blur expected from camera depth-of-field adjustments.
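
The resolution mismatch described in the last bullet can be roughly quantified. The following is a minimal sketch, not the method used in this investigation: it assumes the image has already been converted to a 2D grid of grayscale values and that an analyst has selected the face and background regions by hand. The function names and the `(top, left, height, width)` box format are hypothetical, and the variance of a Laplacian filter is used here only as one common proxy for local sharpness.

```python
from statistics import pvariance


def laplacian_variance(gray):
    """Return the variance of a 4-neighbour Laplacian over a 2D grayscale grid.

    Higher values mean more fine detail (sharper); lower values mean blurrier.
    The grid must be at least 3x3 so that it has interior pixels.
    """
    height, width = len(gray), len(gray[0])
    responses = []
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            neighbours = (
                gray[y - 1][x] + gray[y + 1][x] + gray[y][x - 1] + gray[y][x + 1]
            )
            responses.append(neighbours - 4 * gray[y][x])
    return pvariance(responses)


def crop(gray, top, left, height, width):
    """Cut a rectangular region out of the grid (hypothetical box format)."""
    return [row[left:left + width] for row in gray[top:top + height]]


def sharpness_ratio(gray, face_box, background_box):
    """Compare sharpness of the face region against the background region."""
    face = laplacian_variance(crop(gray, *face_box))
    background = laplacian_variance(crop(gray, *background_box))
    # A completely flat background has zero variance; treat that as "infinitely" blurrier.
    return face / background if background else float("inf")
```

A ratio well above 1 only indicates that the face region carries more fine detail than the background. Compression, resizing, or genuine depth of field can produce the same effect, so a high ratio is a prompt for closer inspection and not evidence of editing on its own.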

Nice Threads

Using a simple reverse image search, we were able to identify the body and background used to create this GAN-collage. The body image appears to have been lifted from a September 2012 blog post about men’s and women’s business fashion. The original image also displays the web address of an Australian business coaching firm, which appears to have been removed by the UNHEARD VOICE actors. We do not believe the fashion blog or the business coaching firm has any additional links to the operation.

Baghdad Calling

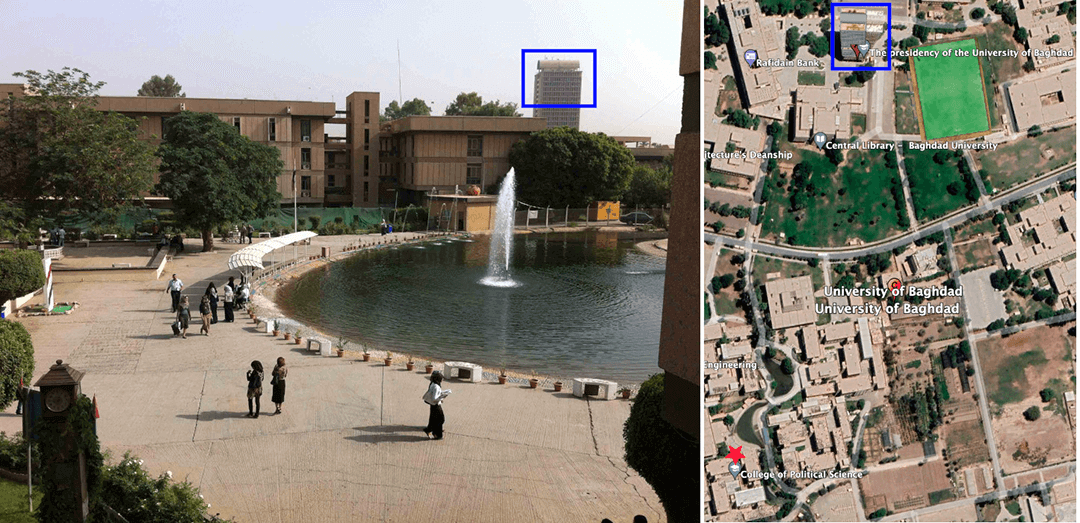

An additional reverse image search revealed that the background image was likely taken from a May 23, 2012, post on the University of Baghdad College of Political Science website. The same image is also available on a May 2012 Google Maps entry for the college, confirming the location. This background was almost certainly used in an attempt to boost the fake persona’s credibility and pass as an individual in the MENA region posting about regional news and geopolitical issues.

An examination of the higher-quality original image and the surrounding area provides a couple of interesting analytical points:

- The low quality of the version used as the GAN-collage background suggests that the original photo was likely zoomed in.

- There appear to be small walkways from which the photo could have been taken, but the perspective in the GAN-collage is unnatural.

Foiled A-GAN!

We believe that the face used by this fake persona was likely GAN-generated, a tactic we saw the same actors employ in different parts of the operation. The GAN-collage’s facial positioning and the low-resolution quality of the archived profile picture made standard GAN artifacts difficult to detect. Such artifacts include central eye and mouth alignment, as well as irregularities in the hair, ears, and eyes. However, when we centered the face and overlaid four randomly generated GAN faces, we found that the placement of the eyes aligned almost exactly, suggesting the original image was also created using artificial intelligence techniques.
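
The overlay comparison can be approximated numerically. The sketch below is illustrative only and is not the tooling used in this investigation: it assumes eye positions have already been marked, by hand or with a landmark detector, as `(x, y)` coordinates normalized to the 0 to 1 range of a centered face crop. The function names, the dictionary layout, and every coordinate in the usage example are hypothetical placeholders.

```python
from math import hypot


def mean_point(points):
    """Average a list of (x, y) points."""
    xs, ys = zip(*points)
    return (sum(xs) / len(xs), sum(ys) / len(ys))


def eye_alignment_error(candidate, references):
    """Return the larger of the two eye offsets between a candidate face
    and the average eye positions of a set of reference GAN faces.

    candidate:  {"left": (x, y), "right": (x, y)} in normalized coordinates
    references: list of dictionaries with the same layout
    """
    errors = []
    for side in ("left", "right"):
        ref_x, ref_y = mean_point([ref[side] for ref in references])
        cand_x, cand_y = candidate[side]
        errors.append(hypot(cand_x - ref_x, cand_y - ref_y))
    return max(errors)


# Hypothetical usage: four reference faces and one re-centered candidate.
references = [
    {"left": (0.380, 0.480), "right": (0.620, 0.480)},
    {"left": (0.381, 0.479), "right": (0.619, 0.481)},
    {"left": (0.379, 0.481), "right": (0.621, 0.479)},
    {"left": (0.380, 0.480), "right": (0.620, 0.480)},
]
candidate = {"left": (0.382, 0.481), "right": (0.618, 0.479)}
error = eye_alignment_error(candidate, references)
```

A small error means the candidate’s eyes sit close to where the reference faces place theirs. Any cut-off for “close enough” would need to be calibrated against real photographs, which can also happen to be centered, so a low error supports but does not prove that a face is GAN-generated.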

It is worth noting that the pasting and editing of the position and dimensions of the face made our usual detection methods less effective. This has implications for the open-source research community, including increased time required to determine whether a facial image is GAN-generated and reduced confidence in the resulting assessments.

Artistic License

We identified a further instance of the same GAN-collage being used as a profile picture by a fake Facebook account in the UNHEARD VOICE operation. On this occasion, the image has been overlaid with a filter to make it look like a sketched or painted illustration. After experimenting with different free-to-use image editing tools, we believe the account operators used either newprofilepic.com or toonme.com to create this effect. It is possible they did so in response to the #ToonMeChallenge on Instagram and Twitter in an attempt to present as an authentic persona.

![Results after uploading the original GAN-collage image to toonme[.]com (left) and newprofilepic[.]com (right)](/uploads/portrait-mode-image-08-illustrations-side-by-side.png?_cchid=44b11e37dc488e4b62c2562b013571e5)