“My people in the United States: This is real,” says a figure who looks distinctly like U.S. music artist Jaden Smith in a series of TikTok videos, speaking Spanish and urging viewers to get in touch. The videos were uploaded by almost 30 TikTok accounts that Graphika found impersonating Smith with deepfakes and claiming to offer financial giveaways.

As generative AI tools supercharge scam content, simple text-based phishing is giving way to high-fidelity synthetic media mimicking trusted figures and institutions. During March, we identified more than 50 cases across YouTube, TikTok, and Facebook that represent a broader pattern we’re tracking: coordinated actors using AI-generated video and audio to simulate individuals who can entice victims into financial fraud. The same tactic could just as readily be used to impersonate company executives, threatening both brand integrity and customer security.

Key Takeaways

- Impersonation of authority: Scammers are using AI-altered and AI-generated imagery of recognizable public figures or trustworthy personas, such as military personnel, to lend legitimacy to financial scams.

- Coordinated cross-platform tactics: Operations across YouTube, TikTok, and Facebook are using patriotic themes, celebrity likenesses, and institutional branding to lure victims.

- Off-platform funneling: The scammers consistently direct users to private messaging services like WhatsApp or spoofed websites to harvest personal data and financial credentials.

Deepfake Jaden Smith on TikTok

The Smith videos are being uploaded by 27 Spanish-language TikTok accounts and one Instagram account that use Gen AI to create realistic video and audio. They promise financial giveaways to customers of major banks, including Chase, Wells Fargo, Citibank, and Santander, and usher victims to WhatsApp, where the operators likely attempt to harvest account credentials.

One of the TikTok accounts impersonating Jaden Smith, showing multiple AI-generated videos using the actor's likeness.

What we found:

- The TikTok usernames include @jayden.smith1665, @foundacionjadensmith, and @jadensmithfoundationn. Some bios describe a "charitable foundation" or claim Smith personally runs the account, exploiting his known philanthropic activities as a credibility hook.

- The videos were created by adding motion to publicly available photos of Smith. In some cases, scammers overlaid footage of Smith on background images of banks to suggest he was actually there.

- Banks targeted include Chase, Wells Fargo, Bank of America, Citibank, Capital One, Truist Bank, TD Bank, Santander, and U.S. Bank.

- We found six WhatsApp numbers in the TikTok accounts’ bios and video descriptions.

- By using a Spanish audio track, the posts may be intended to reach a demographic that could be less exposed to English-language warnings about this type of scam.
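For brand-protection teams, the username patterns listed above lend themselves to simple lookalike screening. The following is a minimal sketch, not Graphika's method: it assumes a list of candidate handles has already been collected, and the function names, the 0.85 threshold, and the unrelated example handle are all illustrative assumptions.

```python
import re
from difflib import SequenceMatcher


def normalize(handle: str) -> str:
    """Lowercase a handle and drop everything except the letters a-z."""
    return re.sub(r"[^a-z]", "", handle.lower())


def lookalike_score(handle: str, target: str) -> float:
    """Best similarity between the target name and the handle.

    Compares the whole handle and every target-length window of it,
    so padded names such as "...foundation" still score highly.
    """
    h, t = normalize(handle), normalize(target)
    if not h or not t:
        return 0.0
    scores = [SequenceMatcher(None, h, t).ratio()]
    for i in range(max(0, len(h) - len(t)) + 1):
        scores.append(SequenceMatcher(None, h[i:i + len(t)], t).ratio())
    return max(scores)


# Handles quoted in the report, plus one hypothetical unrelated handle.
HANDLES = [
    "@jayden.smith1665",
    "@foundacionjadensmith",
    "@jadensmithfoundationn",
    "@weather_updates",  # hypothetical, for contrast only
]

THRESHOLD = 0.85  # assumed cut-off; tune against real data

flagged = [h for h in HANDLES if lookalike_score(h, "jadensmith") >= THRESHOLD]
print(flagged)
```

A fuzzy score only surfaces candidates for review. Fan accounts and namesakes will also match, so bios and content still need a human check.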

AI-Doctored Indian Finance Minister on YouTube

Graphika identified five URLs behind a YouTube ad campaign featuring footage of India's finance minister, Nirmala Sitharaman, that is highly likely AI-manipulated. The ads claim the Indian government has introduced a new "investment policy" and promise viewers they can earn ₹85,000 (about $920) within 24 hours. India's government fact-checking body has confirmed the videos are fraudulent.

An Instagram user shared one of the videos impersonating Indian finance minister Nirmala Sitharaman.

What we found:

- Four of the five domains were registered between January and March 2026 via Namecheap and Dynadot. WHOIS details are private, which makes attribution difficult.

- One site, Inovationin[.]com, borrows the branding of Zerodha, an Indian brokerage firm, and includes a "sign up for free" call to action that’s likely meant to harvest personal or financial information.

- The YouTube video ads display the logos of major financial institutions, including SBI, Bank of Baroda, HDFC, ICICI Bank, and UPI.

- Screenshots of three ads showed a distinctive circular logo as the advertisers’ profile image, suggesting the ads were commissioned by the same actor or are part of a coordinated campaign.

The YouTube ads’ operators consistently used a circular cyan logo as their profile image, indicating coordination.
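Indicators such as Inovationin[.]com are written in "defanged" form so they cannot be clicked by accident. Analysts who want to feed them into lookup tools need to reverse that first. The helpers below are a small sketch of that convention; the function names are illustrative and cover only the bracketed-dot and `hxxp` styles.

```python
def refang(indicator: str) -> str:
    """Turn a defanged indicator back into a usable domain or URL."""
    out = indicator.replace("[.]", ".").replace("(.)", ".")
    if out.lower().startswith("hxxp"):
        out = "http" + out[4:]
    return out


def defang(indicator: str) -> str:
    """Make a domain or URL non-clickable for safe sharing in reports."""
    out = refang(indicator)  # normalize first so repeated calls are harmless
    if out.lower().startswith("http"):
        out = "hxxp" + out[4:]
    return out.replace(".", "[.]")


print(refang("Inovationin[.]com"))
print(defang("Inovationin.com"))
```

Refanged indicators should only be handled in tooling that does not resolve or open them automatically.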

AI-Generated Military Personas on Facebook

Scammers likely based in Kosovo, Pakistan, and Indonesia are impersonating U.S. and Israeli military personnel on Facebook. Their objective seems to be luring users into romance scams or enticing them into paying for vaguely described "fan page" subscriptions. The content they’re uploading, almost certainly created with generative AI, depicts conventionally attractive soldiers through images, video, and audio.

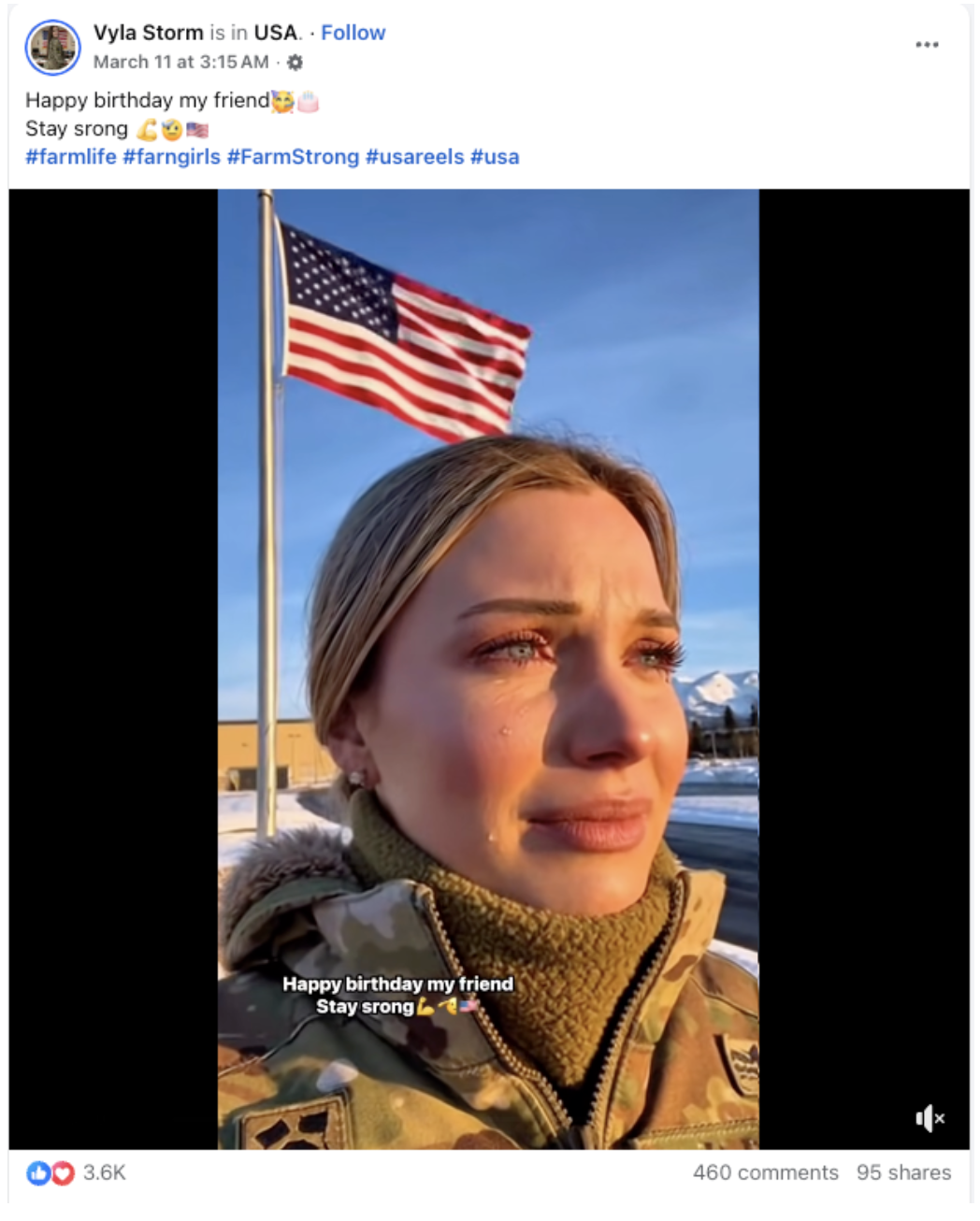

Screenshot of a Facebook Reels video by the account Vyla Storm, featuring an almost certainly AI-generated depiction of a U.S. soldier, accompanied by the hashtag #farngirls and the phrase "stay srong."

What we found:

- Graphika identified 22 Facebook accounts using AI-generated content for the military personas. The platform’s page transparency data indicated that the accounts’ administrators are primarily located in Pakistan, Indonesia, or Kosovo.

- Posts feature patriotic themes and emotionally charged appeals. They use phrases like "God bless America, and God bless everyone who presses the plus button" to encourage interaction.

- Videos feature nearly identical AI-generated imagery and audio depicting "single soldier girls" asking viewers to contact them directly – a common romance scam tactic.

- Identical posts appear across the Facebook accounts. They carry coordination signals such as the misspelled phrase "stay srong" and the consistently misspelled hashtag #farngirls alongside #FarmStrong.
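Shared misspellings are useful because they are rare: unrelated accounts are unlikely to make the same error independently. A minimal sketch of how such markers could be used to group accounts follows. It is not Graphika's tooling; the account names and post texts are hypothetical sample data, and only the two marker strings come from the findings above.

```python
from collections import defaultdict

# Marker strings reported above; extend with other rare strings as needed.
MARKERS = ("stay srong", "#farngirls")


def accounts_by_marker(posts, markers=MARKERS):
    """Map each marker to the set of accounts whose posts contain it."""
    hits = defaultdict(set)
    for account, text in posts:
        lowered = text.lower()
        for marker in markers:
            if marker in lowered:
                hits[marker].add(account)
    return dict(hits)


def coordinated_clusters(posts, markers=MARKERS, min_accounts=2):
    """Keep only markers shared by at least `min_accounts` distinct accounts."""
    return {
        marker: accounts
        for marker, accounts in accounts_by_marker(posts, markers).items()
        if len(accounts) >= min_accounts
    }


# Hypothetical sample data, not real posts or account names.
sample_posts = [
    ("page_a", "Stay srong, soldiers! #farngirls #FarmStrong"),
    ("page_b", "God bless America #farngirls"),
    ("page_c", "Stay strong out there"),
]

print(coordinated_clusters(sample_posts))
```

A shared marker is a lead rather than proof of coordination. It carries more weight when combined with other signals, such as identical media or overlapping administrator locations.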

Financial Scam Funnel Playbook

Across these three distinct operations, Graphika observed four stages:

- Dangling a synthetic lure: AI-generated content mimicking a trusted authority figure is posted or advertised on a major platform. Using Gen AI significantly lowers the cost and skill requirements for producing compelling synthetic media at scale.

- Signaling credibility: Institutional logos, patriotic imagery, or fabricated "charitable foundation" bios increase perceived legitimacy by impersonating banks, government bodies, or military personnel.

- Pivoting off the platform: Posts direct victims to get in touch via WhatsApp or visit spoofed websites, moving them away from moderated social media platforms.

- Harvesting credentials: Phishing pages or direct social engineering ploys are designed to extract login credentials, financial details, or payments for fake subscriptions.

When combined with the rapid, privacy-shielded domain registration we observed – evading attribution and enabling fast replacement of taken-down assets – these stages represent consistent tactics that point to shared tooling or a common fraud-as-a-service playbook.
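The off-platform pivot is often the easiest stage to detect automatically, because the contact details have to be visible to victims. The sketch below pulls WhatsApp click-to-chat links and international-format phone numbers out of a bio or video description. The regular expressions are assumptions about common formats rather than an exhaustive parser, and the sample bio uses fictional numbers.

```python
import re

# WhatsApp click-to-chat link formats (wa.me and api.whatsapp.com).
WA_LINK = re.compile(
    r"(?:wa\.me/|api\.whatsapp\.com/send\?phone=)\+?(\d{7,15})",
    re.IGNORECASE,
)
# Loose pattern for numbers written with a leading "+", e.g. "+1 (555) 010-0002".
PHONE = re.compile(r"\+\d[\d\s().-]{6,18}\d")


def extract_contacts(text: str) -> set[str]:
    """Return digit-only contact numbers found in a bio or description."""
    numbers = {m.group(1) for m in WA_LINK.finditer(text)}
    for m in PHONE.finditer(text):
        digits = re.sub(r"\D", "", m.group(0))
        if 7 <= len(digits) <= 15:  # plausible international number length
            numbers.add(digits)
    return numbers


# Hypothetical bio text with fictional numbers.
bio = "Escríbeme por WhatsApp: https://wa.me/15550100001 o +1 (555) 010-0002"
print(sorted(extract_contacts(bio)))
```

Normalizing numbers to digits makes it possible to spot the same contact reused across several accounts, which is itself a coordination signal.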

For financial-risk and brand-safety teams, we offer tailored assessments of how synthetic media is targeting your executives or customers. If you'd like to understand the infrastructure behind these campaigns or learn more about how our platform tracks them, book a demo.